Methodology: How we substantiate performance and impact metrics

Our methodology is built around traceability, conservative assumptions and reproducible measurement protocols.

1) Evidence categories

All metrics and statements are assigned to one of the following evidence types:

Public benchmarks (external sources). Used for sector context, typical ranges and state-of-practice comparisons. These sources are linked directly next to the claim and listed in the references section.

Examples: industry adoption reports, EU studies, peer-reviewed research articles, standards and technical guidelines.

Measured results (pilot or operational data). Used for technical KPIs and operational performance (e.g., throughput, setup time, runtime frequency). Measured results are supported by a clear test protocol, measurement conditions and a definition of the metric.

Engineering estimates (planning assumptions). Used when a value is indicative and not directly measurable upfront (e.g., order-of-magnitude productivity loss due to downtime). Estimates are explicitly labelled as such, include assumptions and are updated once measured data becomes available.

2) Metric definitions (to avoid ambiguity)

Every metric is reported with a written definition and scope. For example:

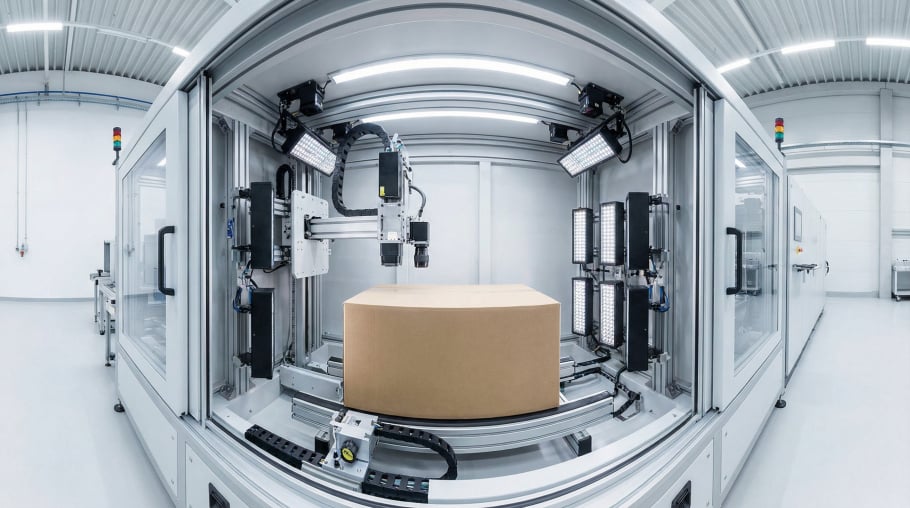

Setup / reconfiguration time is defined as the sum of the steps required to deploy or adapt a system, such as:

calibration procedures

workspace/scene configuration

end-effector parameterisation

component onboarding and validation run

Each step is timed separately so totals are reproducible and comparable.
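
As a minimal sketch of how such a total could be assembled from separately timed steps: the step names follow the list above, while the `StepTiming` type, the `total_setup_time` helper and all timing values are hypothetical and purely illustrative.

```python
from dataclasses import dataclass


@dataclass
class StepTiming:
    """One separately timed setup step (hypothetical record type)."""
    name: str
    seconds: float


def total_setup_time(steps):
    """Sum the separately timed steps into one setup time in seconds."""
    return sum(step.seconds for step in steps)


# Illustrative values for a single change event, not measured data.
steps = [
    StepTiming("calibration procedures", 420.0),
    StepTiming("workspace/scene configuration", 300.0),
    StepTiming("end-effector parameterisation", 180.0),
    StepTiming("component onboarding and validation run", 600.0),
]

total = total_setup_time(steps)
for step in steps:
    print(f"{step.name}: {step.seconds:.0f} s")
print(f"total: {total:.0f} s ({total / 60:.1f} min)")
```

Keeping the per-step values alongside the total makes it possible to see which step drives a difference between two deployments.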

Runtime performance (e.g., “≥1 Hz”) is defined as end-to-end processing frequency under the target deployment constraints. It is measured from system timestamps and profiling logs rather than derived from theoretical compute estimates.
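
One way such a frequency could be derived from logged timestamps is sketched below. It assumes one completion timestamp (in seconds) is logged per processing cycle; the function names and the sample timestamps are hypothetical. Reporting the slowest cycle alongside the mean is an illustrative choice, not a requirement stated above.

```python
def mean_frequency_hz(completion_timestamps):
    """Mean end-to-end frequency: completed cycles divided by elapsed time."""
    if len(completion_timestamps) < 2:
        raise ValueError("need at least two timestamps")
    elapsed = completion_timestamps[-1] - completion_timestamps[0]
    if elapsed <= 0:
        raise ValueError("timestamps must be strictly increasing overall")
    return (len(completion_timestamps) - 1) / elapsed


def worst_case_frequency_hz(completion_timestamps):
    """Frequency implied by the slowest single cycle in the log."""
    intervals = [
        later - earlier
        for earlier, later in zip(completion_timestamps, completion_timestamps[1:])
    ]
    if not intervals or max(intervals) <= 0:
        raise ValueError("need at least two increasing timestamps")
    return 1.0 / max(intervals)


# Illustrative log excerpt (seconds), not measured data.
timestamps = [0.0, 0.5, 1.0, 2.0, 2.5]

mean_hz = mean_frequency_hz(timestamps)
worst_hz = worst_case_frequency_hz(timestamps)
print(f"mean: {mean_hz:.2f} Hz, slowest cycle: {worst_hz:.2f} Hz")
print("meets >= 1 Hz on every cycle:", worst_hz >= 1.0)
```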

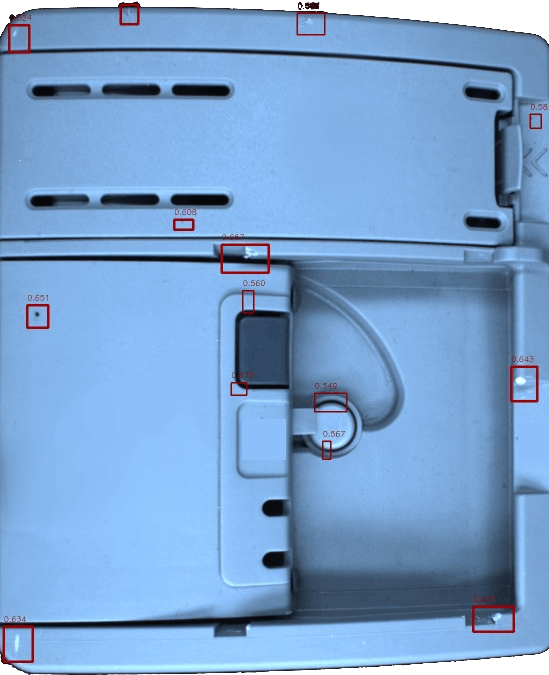

Perception quality (e.g., segmentation, detection) is reported with standard KPIs (e.g., IoU, precision/recall), plus robustness checks across varying lighting, motion and clutter conditions.
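
For reference, the KPIs named above can be computed as in the following sketch. It assumes axis-aligned bounding boxes given as `(x_min, y_min, x_max, y_max)` and pre-counted true positives, false positives and false negatives; the helper names and example values are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Illustrative values only.
overlap = iou((0.0, 0.0, 2.0, 2.0), (1.0, 1.0, 3.0, 3.0))
precision, recall = precision_recall(tp=8, fp=2, fn=2)
print(f"IoU: {overlap:.3f}, precision: {precision:.2f}, recall: {recall:.2f}")
```

For robustness checks, the same functions would be applied separately to each condition (lighting, motion, clutter) so that the results can be compared per condition.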

3) Measurement protocol and reproducibility

For any measured KPI, we apply a consistent protocol:

Test conditions are documented: hardware class, sensor configuration, runtime constraints and software version.

Repeatability is ensured: measurements are repeated across multiple runs and, where relevant, multiple change events.

Statistics are reported: at minimum, we publish the number of repetitions (n), a central value (median or mean) and a measure of spread (min–max range or standard deviation).

Edge cases are included: performance is tested under realistic disturbances (occlusion, conveyor motion, lighting changes) when applicable.
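
The reported statistics could be produced with a few lines using Python's standard `statistics` module. This is a sketch only: the `summarise` helper and the sample values are hypothetical, and the sample standard deviation (n - 1 denominator) is assumed here.

```python
import statistics


def summarise(samples):
    """Return n, median, mean, min, max and sample standard deviation."""
    if len(samples) < 2:
        raise ValueError("at least two repetitions are needed for a spread")
    return {
        "n": len(samples),
        "median": statistics.median(samples),
        "mean": statistics.mean(samples),
        "min": min(samples),
        "max": max(samples),
        "stdev": statistics.stdev(samples),  # sample standard deviation
    }


# Illustrative setup times in minutes across five runs, not measured data.
samples = [24.0, 25.0, 26.0, 25.0, 30.0]

summary = summarise(samples)
for key, value in summary.items():
    print(f"{key}: {value}")
```

Reporting the median next to the mean helps when a single slow run (here, the 30-minute run) pulls the mean upwards.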

4) How we use linked sources correctly

External sources are used to establish context and baseline ranges, not to “prove” internal results. We apply the following rule:

If a linked source reports a metric in a comparable setting, we use it to support the plausibility and order of magnitude of a claim.

If a claim is a precise percentage or absolute number, we only present it if the linked source explicitly supports that exact figure.

Otherwise, we use qualitative wording (“a large share”, “commonly”, “often”) or a bounded range (“typically X–Y”) and keep the statement conservative.
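
The rule above can be read as a simple decision procedure. The sketch below is one possible encoding, assuming each claim carries a flag stating whether the linked source explicitly supports the exact figure; the `phrase_claim` function, its parameters and the example values are hypothetical.

```python
def phrase_claim(value, unit, source_supports_exact_figure,
                 bounded_range=None, qualitative="commonly"):
    """Choose how a figure may be worded, following the sourcing rule."""
    if source_supports_exact_figure:
        # The linked source explicitly states this exact figure.
        return f"{value} {unit}"
    if bounded_range is not None:
        # Fall back to a bounded range.
        low, high = bounded_range
        return f"typically {low}–{high} {unit}"
    # No exact support and no defensible range: stay qualitative.
    return qualitative


# Illustrative calls with made-up numbers.
print(phrase_claim(30, "%", source_supports_exact_figure=True))
print(phrase_claim(30, "%", source_supports_exact_figure=False,
                   bounded_range=(20, 40)))
print(phrase_claim(30, "%", source_supports_exact_figure=False))
```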

5) How we derive productivity and downtime impacts

Claims about productivity impact due to reconfiguration or downtime are derived transparently from:

Downtime per change event (measured or conservatively estimated)

Change frequency (observed or documented in operational planning)

Available production time (shift pattern assumptions)

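A typical calculation is expressed as a bounded range rather than a single point, obtained by evaluating the following at the lower and upper bounds of the inputs:

Downtime per week = (downtime per change event) × (change events per week)

Indicative impact (%) = 100 × (downtime per week) / (available production time per week)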

If the input values are estimates, the output is presented as an order-of-magnitude range and updated once measurement data is available.
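
A worked sketch of this calculation is given below. The input bounds (20 to 40 minutes per change event, 3 to 5 change events per week, one 8-hour shift on 5 days) are hypothetical and purely illustrative, as are the function names; the factor of 100 converts the ratio into a percentage.

```python
def indicative_impact_percent(downtime_min_per_event, events_per_week,
                              available_min_per_week):
    """Share of available production time lost to change events, in percent."""
    if available_min_per_week <= 0:
        raise ValueError("available production time must be positive")
    downtime_per_week = downtime_min_per_event * events_per_week
    return 100.0 * downtime_per_week / available_min_per_week


def impact_range_percent(downtime_bounds, frequency_bounds,
                         available_min_per_week):
    """Evaluate the impact at the lower and upper bounds of the inputs."""
    low = indicative_impact_percent(
        downtime_bounds[0], frequency_bounds[0], available_min_per_week)
    high = indicative_impact_percent(
        downtime_bounds[1], frequency_bounds[1], available_min_per_week)
    return low, high


# Assumed shift pattern: 8 h x 60 min x 5 days = 2400 min per week.
available = 8 * 60 * 5

low, high = impact_range_percent((20, 40), (3, 5), available)
print(f"indicative impact: {low:.1f}% to {high:.1f}%")
```

With these assumed inputs the range spans 60 to 200 minutes of downtime per week against 2400 available minutes, which is why the result is reported as a range rather than a single figure.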

6) Transparency and updates

Wherever feasible, we provide:

direct links to public sources

a short “how measured” note for internal KPIs

a clear distinction between measured values and estimates

As additional measurements become available, estimates are replaced by measured results while retaining traceability (i.e., what changed and why).